Last Updated: 03/04/2026

The next time you’re scrolling Facebook and see an AI-generated image of Jesus Christ made out of shrimp, surrounded by thousands of comments that all say “Amen,” you’re looking at the Dead Internet Theory in action. Whether you realize it or not.

The Dead Internet Theory is the idea that most of what we experience online is no longer created by real people. Instead, it’s generated by bots, algorithms, and AI systems. The humans still using the internet are increasingly interacting with machines pretending to be other humans. What started as a fringe conspiracy theory is now backed by enough real data to make even skeptics uncomfortable.

Where Did This Come From?

The theory started gaining traction around 2016 on various forums and imageboards where users noticed an uptick in repetitive, low-quality content that felt… off. It took its current shape in 2021, when a user named “IlluminatiPirate” posted a lengthy thread titled “Dead Internet Theory: Most Of The Internet Is Fake” on the Agora Road’s Macintosh Cafe forum. The Atlantic picked it up later that year and brought it to a mainstream audience, and it’s been part of the broader internet discourse ever since.

The original theory has two parts. The first is the observable claim: that organic human activity online has been displaced by bots, AI-generated content, and algorithmically curated search results. The second is the conspiracy part: that governments and corporations are doing this intentionally to manipulate the population.

The first part is where the real conversation is happening in 2026, because the data has gotten hard to ignore.

The Numbers Are Getting Uncomfortable

Here’s where it stops feeling like a conspiracy theory and starts feeling like a status report.

According to the 2025 Imperva Bad Bot Report, automated bot traffic surpassed human-generated traffic for the first time in a decade, making up 51% of all web traffic in 2024. That’s not a fringe estimate. Imperva tracks this across thousands of domains and industries globally. The shift is largely driven by the rise of AI and large language models, which have made it trivially easy to create and scale bots.

And it’s not just traffic. Ahrefs analyzed 900,000 newly created web pages in April 2025 and found that 74.2% of them contained AI-generated content. In that sample, only about a quarter of new pages were entirely human-written. On X (formerly Twitter), roughly 64% of accounts are likely bots. An estimated 54% of LinkedIn’s long-form posts are AI-generated. Even Zillow’s real estate reviews jumped from 3.6% AI-generated in 2019 to 23.7% in 2025.

We went from “some of the internet is fake” to “most of the new internet is at least partially synthetic” in about three years.

What Does This Actually Look Like?

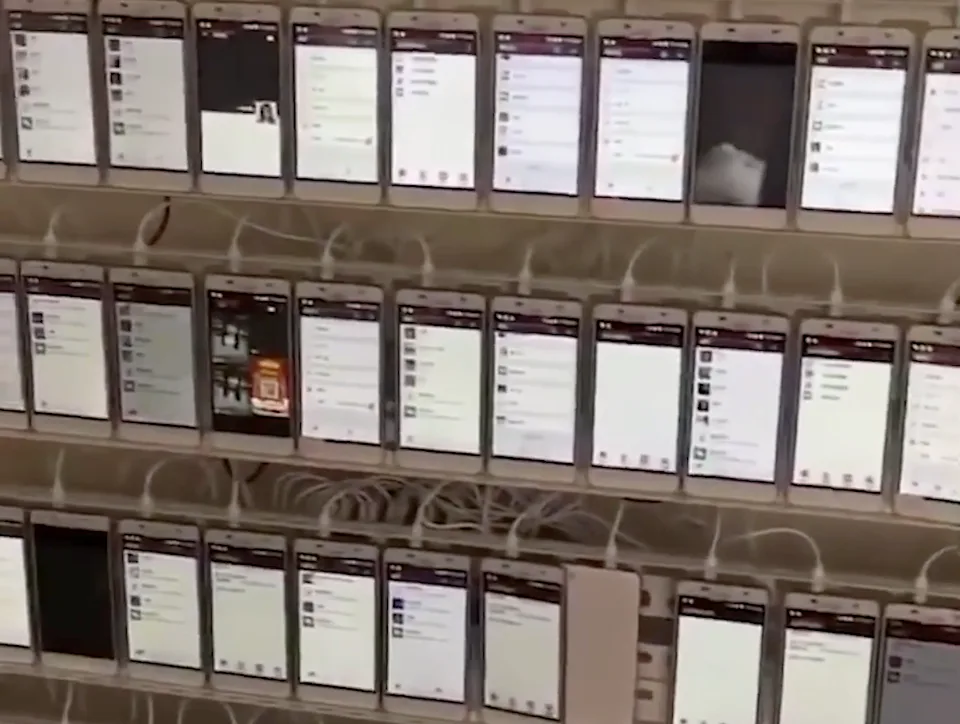

You’ve probably already seen it without putting a name to it. AI-generated religious imagery going viral on Facebook. Not just Shrimp Jesus, but entire ecosystems of accounts posting bizarre AI mashups that rack up thousands of AI-generated comments. Content farms churning out hundreds of articles a day with minimal human oversight, flooding search results with plausible-sounding information that may or may not be accurate. Dating apps dealing with waves of sophisticated AI-generated profiles, some running scams, others just inflating platform numbers.

But the most insidious version is subtler. It’s the LinkedIn post that sounds like every other LinkedIn post. It’s the product review that reads perfectly but says nothing specific. It’s the search result that answers your question in a way that feels technically correct but oddly hollow.

AI content doesn’t always announce itself with six-fingered hands and Shrimp Jesus. Sometimes it just makes the internet feel slightly more beige.

Is Any of This Actually a Conspiracy?

This is where it’s worth being precise. The observable facts, that bots generate a majority of web traffic, that AI content has flooded the web, that a handful of corporations control most of what we see online, are well documented. Those aren’t conspiracy theories. Those are data points from Imperva, Ahrefs, and the platforms themselves.

The conspiracy part, that this is a coordinated effort by governments and corporations to deliberately replace human activity, is where it falls apart for most serious observers. We’re probably overestimating AI’s direct persuasive power while underestimating its capacity to erode institutional trust. The internet isn’t being killed by a shadowy cabal. It’s being gradually hollowed out by economic incentives that reward volume over quality and engagement over authenticity.

The irony isn’t lost on anyone that Sam Altman, the CEO of OpenAI, has publicly acknowledged bot proliferation concerns on X, the very platform his company’s technology has helped flood with synthetic content.

What This Means If You Use the Internet (So, Everyone)

Even setting aside the conspiracy framing, the practical implications are real. Trust is eroding. When more than half of web traffic is automated and three-quarters of new content has an AI fingerprint, the default assumption shifts from “this is probably real” to “this might not be.”

For anyone running a business online, especially small businesses competing for visibility, this matters. The economic model that funded quality content creation is under real pressure. Publishers are losing ad revenue, organic search traffic is declining across the board, and Gartner predicts traditional search engine volume will drop 25% by 2026 as users shift to AI chatbots.

For regular users, it means digital literacy isn’t optional anymore. You need to develop instincts for what’s real and what’s generated, because the tools to distinguish them are imperfect and the volume of synthetic content is only increasing.

How to Tell What’s Real

There’s no foolproof system, but here are some things that actually help.

Look for specificity and personal experience. AI-generated content tends to be generic and surface-level. If a review mentions a specific scenario, an unexpected use case, or a genuine complaint, that’s a good sign it came from a human. Humans are messy. AI is smooth.

Check the author. Real content creators usually have a track record, a voice that’s consistent across posts, and details that don’t exist in a vacuum. A name, a bio, other work you can cross-reference. If the author feels interchangeable, the content might be too.

Watch for engagement patterns. Thousands of comments that all say some variation of “Beautiful!” or “Amen!” or “So inspiring!” on a Facebook post are a red flag. Real engagement is uneven, argumentative, off-topic, sometimes weird. Uniformity is the tell.

Be skeptical of perfection. AI content tends to be well-structured, grammatically clean, and completely devoid of personality. Human writing has rough edges, strong opinions, and occasionally bad takes. If something reads like it was optimized by a committee, it probably was. Just not a human one.

Use detection tools carefully. AI content detectors exist and are improving, but none of them are 100% accurate. They’re a useful signal, not a verdict.
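If you want to see how the engagement-pattern tip could be put into numbers, here is a rough Python sketch. It measures what share of a post’s comments collapse to the same text once you ignore case, punctuation, and emoji. Everything in it is an assumption made for illustration: the function names (`normalize`, `uniformity_score`, `looks_uniform`) are hypothetical, the 50% threshold and 20-comment minimum are arbitrary, and it is not how any platform or detection tool actually works.

```python
import re
from collections import Counter


def normalize(comment: str) -> str:
    """Lowercase a comment and drop punctuation, emoji, and extra spaces."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", comment.lower())
    return " ".join(cleaned.split())


def uniformity_score(comments: list[str]) -> float:
    """Share of comments that match the single most common comment.

    Returns 0.0 when there is nothing to compare. Values near 1.0 mean
    almost every comment says the same thing after normalization.
    """
    normalized = [normalize(c) for c in comments]
    # Ignore comments that were only emoji or punctuation.
    normalized = [c for c in normalized if c]
    if not normalized:
        return 0.0
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)


def looks_uniform(
    comments: list[str],
    threshold: float = 0.5,   # assumed cutoff, not an established value
    min_comments: int = 20,   # assumed minimum sample size
) -> bool:
    """Flag a comment section only if it is both large and repetitive."""
    return (
        len(comments) >= min_comments
        and uniformity_score(comments) >= threshold
    )
```

A score like this is a signal, not a verdict. A real community can pile on with the same one-word reply, and a bot network can vary its wording enough to slip under any fixed threshold.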

The Internet Isn’t Dead Yet

The full-blown conspiracy version of the Dead Internet Theory, that the entire internet was secretly killed and replaced by AI, remains an exaggeration. Real people are still creating, arguing, building things, and posting photos of their lunch. But the proportions have shifted dramatically, and the trajectory is clear.

The internet we grew up with, chaotic, human, weird, full of personal homepages and forum arguments and genuine discovery, is being gradually diluted. Not by conspiracy, but by the economics of scale, the convenience of automation, and the reality that generating content is now nearly free while creating something original still costs time and thought.

The Dead Internet Theory may have started as paranoid speculation, but in 2026, it reads more like an early warning that we didn’t take seriously enough.

Where to Learn More

If this topic grabbed you, there are a few places worth spending time. The original source material is still online and surprisingly readable for something that launched a conspiracy theory. The data behind the bot traffic and AI content claims comes from annual reports that are worth bookmarking if you care about what’s happening to the web.

- Dead Internet Theory: Most Of The Internet Is Fake – The original 2021 forum post by IlluminatiPirate on Agora Road’s Macintosh Cafe that started the whole conversation. Reading it now, knowing what’s happened since, is a different experience than it was in 2021.

- Maybe You Missed It, but the Internet ‘Died’ Five Years Ago – Kaitlyn Tiffany’s 2021 Atlantic article that brought the theory to a mainstream audience. Still one of the best pieces written on the subject.

- 2025 Imperva Bad Bot Report – The annual report that put bots at 51% of all web traffic in 2024. Imperva has been tracking this for over a decade, and the trend lines are not encouraging.

- The Dead Internet Theory – The Why Files (YouTube) – AJ does a great deep dive on this topic. The video has over 3 million views and it’s one of the better explainers out there. If you prefer watching over reading, start here.

- 74% of New Webpages Include AI Content – Ahrefs’ analysis of 900,000 newly created web pages. The methodology is transparent and the findings are sobering. If you only read one data-driven piece on AI content proliferation, make it this one.